Faster Collection Iterators

The ECMAScript 2015 Language Specification introduced collections into JavaScript, specifically Maps and Sets (among others like WeakMaps and WeakSets). These collections are iterable via the newly introduced iteration protocol, which means you can use them together with language constructs like for-of and spreads.

For example for Sets

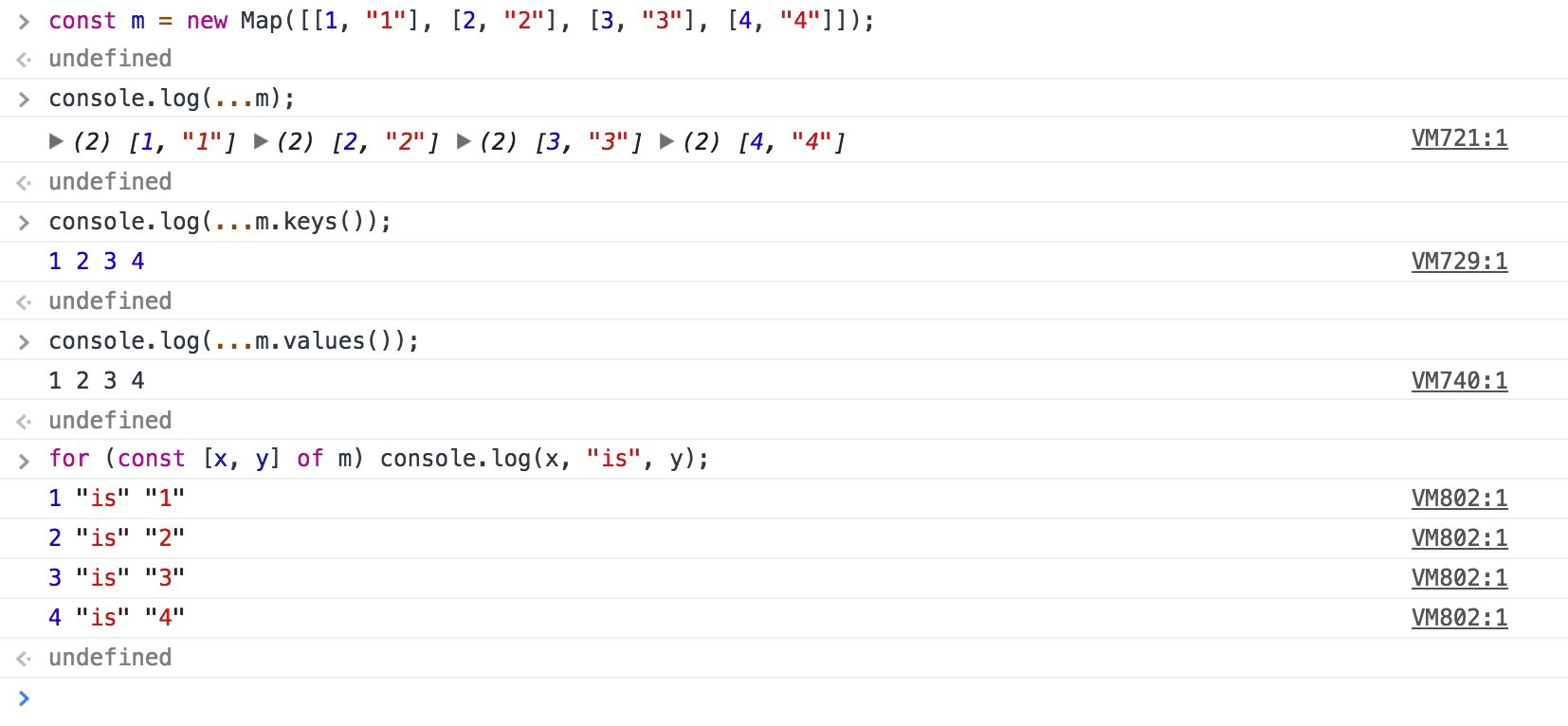

and similarly for Maps

demoing various kinds of iteration. This is defined and implemented by Map Iterator Objects and Set Iterator Objects.

Unfortunately these iterator objects - like the collections themselves - weren't really well optimized in V8 so far. In fact it was so bad, that Brian Terlson complained to me about it, because he's using Sets to implement a custom regular expression engine (for the ECMAScript specification). He (rightfully) noted that Chrome and Node don't have any reasonably fast way to iterate Sets. So it was about time to change that. In order to understand what's slow and why, it's important to understand how iteration works. This is easiest to understand by looking at a very simple for-of loop:

function sum(iterable) {

let x = 0;

for (const y of iterable) x += y;

return x;

}Running that through Babel we get the following code:

function sum(iterable) {

var x = 0;

var _iteratorNormalCompletion = true;

var _didIteratorError = false;

var _iteratorError = undefined;

try {

for (

var _iterator = iterable[Symbol.iterator](), _step;

!(_iteratorNormalCompletion = (_step = _iterator.next()).done);

_iteratorNormalCompletion = true

) {

var y = _step.value;

x += y;

}

} catch (err) {

_didIteratorError = true;

_iteratorError = err;

} finally {

try {

if (!_iteratorNormalCompletion && _iterator.return) {

_iterator.return();

}

} finally {

if (_didIteratorError) {

throw _iteratorError;

}

}

}

return x;

}If you've seen one of my recent talks about ES2015 and beyond, you'll already know that all modern JavaScript engines essentially perform the same desugaring that Babel does here to implement for-of (details vary).

Ignoring the (boring) exception handling, the core of the iteration boils down to this very simple loop:

function sum(iterable) {

var x = 0;

var iterator = iterable[Symbol.iterator]();

for (;;) {

var iterResult = iterator.next();

if (iterResult.done) break;

x += iterResult.value;

}

return x;

}Side note: There's a great blog post Iterables and iterators in ECMAScript 6 and a whole section Iterables and iterators in the book "Exploring ES6" by Axel Rauschmayer, which provides a lot of background on the theory and the use of iterators. I highly recommend reading those resources.

The key to great performance for iteration is to make sure that the repeated calls to iterator.next() in the loop are optimized well, and ideally completely avoid the allocation of the iterResult using advanced compiler techniques like store-load propagation, escape analysis and scalar replacement of aggregates. To really shine performance-wise, the optimizing compiler should also completely eliminate the allocation of the iterator itself - the iterable[Symbol.iterator]() call - and operate on the backing-store of the iterable directly. We did this for the Array and String iterators before, implemented by Caitlin Potter, the design document provides some details of the implementation. Essentially we could use the same ideas for the collection iterators, with some simplifications, as Maps and Sets are indeed simpler than Arrays.

The main reason why Set iterators were slow, was the fact that they were implemented via a mix of self-hosted JavaScript and C++ code. For example, the %SetIteratorPrototype%.next() was implemented like this.

function SetIteratorNextJS() {

if (!IS_SET_ITERATOR(this)) {

throw %make_type_error(kIncompatibleMethodReceiver,

'Set Iterator.prototype.next', this);

}

var value_array = [UNDEFINED, UNDEFINED];

var result = %_CreateIterResultObject(value_array, false);

switch (%SetIteratorNext(this, value_array)) {

case 0:

result.value = UNDEFINED;

result.done = true;

break;

case ITERATOR_KIND_VALUES:

result.value = value_array[0];

break;

case ITERATOR_KIND_ENTRIES:

value_array[1] = value_array[0];

break;

}

return result;

}What this code does is essentially the following:

- Allocate a new

value_arraywith two slots initialized toundefined. - Allocate the

resultobject as{value: value_array, done: false}. - Call into the C++

%SetIteratorNextruntime function, passing the iteratorthisand thevalue_arrayand letting it initializevalue_array[0]with the next key (if there's any). This looks a bit odd, but was done to haveMapandSetuniform (for better or worse...). - Dispatch depending on the return value of the C++ call and initialize the

resultappropriately.

So we definitely always allocated two objects, the value_array and the result, per iteration step, and there was no way for the optimizing compiler (neither TurboFan nor Crankshaft) to get rid of any of those. Even worse, every iteration step had to transition between JavaScript and C++ land. A rough visualization of how this works in case of the sum function would look like this:

In V8 the execution is always in one of two states (actually there are more, but that doesn't matter here), you're either executing C++ code or you're executing JavaScript. Transitioning between these two states is expensive. Going from JavaScript land to C++ land is accomplished via the so-called CEntryStub, which dispatches to a specific Runtime_Something function in C++ (the Runtime_SetIteratorNext function in this example). So avoiding these transitions and the allocations for the value_array and result objects whenever possible is the key to great performance.

The new implementation of %SetIteratorPrototype%.next() does exactly that. The baseline implementation (i.e. the code you execute before the calling function becomes hot and eventually gets optimized by TurboFan) is now fully implemented in JavaScript land, using our so-called CodeStubAssembler, and essentially only calls to C++ to handle garbage collection (when available memory is exhausted) or in case of an exception. The optimized implementation fully inlines the call to iterator.next() into the optimized code of the calling function, i.e. sum in the example above, and leveraging advanced compiler techniques it's able to avoid allocating both the iterator and the iterResult in case of common for-of loops.

Callback based iteration #

In addition to the iteration protocol, JavaScript also provides the Set.prototype.forEach and Map.prototype.forEach, which receive a callback that's invoked on all the items in the collection. Those were also implemented in C++ (and until very recently with some additional self-hosted JavaScript), making the baseline performance pretty bad, because not only do we need to transition from JavaScript to C++ land, but to handle the callback we need to transition back into JavaScript.

So just porting the baseline for the two forEach builtins to the CodeStubAssembler already allowed me to pick a couple of low-hanging fruits here. Fully optimizing and inlining the forEach builtins into TurboFan optimized code requires a bit more magic and is not yet implemented.

Performance Improvements #

Overall we improve the performance of Map and Set iteration by up to a factor of 11 from Chrome 60 to Chrome 61.

I used the following simple micro-benchmark to measure the iteration overhead:

const s = new Set();

const m = new Map();

for (let i = 0; i < 1e7; ++i) {

m.set(i, i);

s.add(i);

}

function SetForOf() {

for (const x of s) {

}

}

function SetForOfEntries() {

for (const x of s.entries()) {

}

}

function SetForEach() {

s.forEach(function(key, key, set) {});

}

function MapForOf() {

for (const x of m) {

}

}

function MapForOfKeys() {

for (const x of m.keys()) {

}

}

function MapForOfValues() {

for (const x of m.values()) {

}

}

function MapForEach() {

m.forEach(function(val, key, map) {});

}

const TESTS = [

SetForOf,

SetForOfEntries,

SetForEach,

MapForOf,

MapForOfKeys,

MapForOfValues,

MapForEach

];

// Warmup.

for (const test of TESTS) {

for (let i = 0; i < 10; ++i) test();

}

// Actual tests.

for (const test of TESTS) {

console.time(test.name);

test();

console.timeEnd(test.name);

}We still lose some performance, especially in case of entry iteration (both SetForOfEntries and MapForOf) because our escape analysis cannot yet eliminate the key-value array allocations, and we also still lose some performance in case of forEach since we don't have it working inside optimized code (i.e. inlined by TurboFan) at this point. But all of this is fixable and part of the long-term ES2015 and beyond performance plan.